
At Les-Tilleuls.coop, we’re constantly striving to reduce the environmental footprint and hosting costs of the projects we work on (eco-design, GreenOps, and FinOps strategies…). We generally focus on optimizing production code and infrastructure, but the CI/CD pipelines used to build and deploy applications can also consume a lot of physical and financial resources. In addition, fast DevOps feedback loops improve the working conditions and efficiency of developers.

As the creator of API Platform and Symfony Docker, two popular free software projects, I’ve been working with our SRE team on reducing the resources consumed when building, testing, and deploying projects that use them… with impressive results! Although I’ve used these projects as examples in this article, the optimizations described can be applied to any project, written in any language.

Symfony Docker is a popular installer and runtime for the Symfony web framework. As its name suggests, it uses Docker under the hood. The project provides a complete, optimized setup for creating new Symfony projects and running them locally, in CI/CD jobs, and in production. Symfony Docker allows running PHP projects without the need for any local dependencies except Docker itself.

The official distribution of API Platform uses a Symfony Docker derivative that provides an extra Next.js service, as well as a Helm chart for deploying API Platform projects to Kubernetes.

Storing Docker Compose Cache in GitHub Actions Thanks to Bake

Symfony Docker and API Platform both provide native GitHub integration. New projects can be created from template repositories, and default installations ship with a GitHub Actions workflow that builds and tests the application.

The GHA workflow uses Docker Compose to build the images and start the project, then runs tests and linters in the containers.

Using Docker Compose in GHA workflows is convenient and straightforward but causes a major performance issue: the build cache isn’t kept between runs! Indeed, neither Docker nor GitHub provides an official action to store the cache layers created by Docker Compose in the GHA cache. It is possible to store the cache in a remote Docker registry instead, but this makes things more complex, and this strategy isn’t as fast as using the GHA cache anyway.

BuildKit and Buildx have beta support for exporting cached build layers to the GitHub Actions cache, but this feature isn’t exposed through the Docker Compose CLI (yet?).

However, Docker recently launched Bake, an experimental high-level tool leveraging BuildKit and Buildx to build Docker projects as quickly and conveniently as possible, and Bake exposes this feature! Bake also has an official GitHub action, maintained by Docker Inc.

Bake comes with its own configuration format relying on HashiCorp Configuration Language (HCL; expect future blog posts explaining how to unleash the power of Bake with HCL, and subscribe to my Mastodon or Twitter accounts to be notified!). Fortunately, Bake also supports the Compose spec! That means it should be possible to use the Bake Action to build API Platform and Symfony projects and to reuse the cached layers from one build to the next. And indeed, after some improvements to the Docker Compose files, this GitHub workflow works as expected:

```yaml
on: [push]

jobs:
  tests:
    runs-on: ubuntu-latest
    steps:
      -
        name: Checkout
        uses: actions/checkout@v3
      -
        name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v2
      -
        name: Build Docker images
        uses: docker/bake-action@v3
        with:
          load: true
          files: |
            docker-compose.yml
            docker-compose.override.yml
          set: |
            *.cache-from=type=gha,scope=${{github.ref}}
            *.cache-from=type=gha,scope=refs/heads/main
            *.cache-to=type=gha,scope=${{github.ref}},mode=max
      -
        name: Start services
        run: docker compose up --wait --no-build
      # Run your tests and linters
```

After checking out the code and installing a recent version of Buildx, the workflow builds the images using Bake.

The following Bake Action inputs are used to achieve our goal:

- `load` tells Bake to export the resulting images to the local Docker client, so they can be reused by Docker Compose.
- `files` defines the list of Docker Compose files from which image definitions are obtained. `docker-compose.override.yml` must be in the list because it contains the definitions of the development images that we need to run the tests.
- The `cache-from` and `cache-to` options tell Bake to fetch cached layers from, and store them in, the GitHub Actions cache. The `main` branch cache is always read, but the cache written by other (feature) branches is scoped to prevent cache pollution. The `mode=max` parameter tells Bake to store all layers in the GHA cache (by default, only the layers of the final images are stored).
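To make the scoping rule concrete, here is a minimal Python sketch that derives the `set` overrides for a given Git ref. The helper `bake_cache_overrides` is hypothetical (it is not part of Bake or the Bake Action) and assumes, as in the workflow, that `refs/heads/main` is the default branch. Unlike the YAML, which lists the `main` scope even when running on `main`, it skips that duplicate read.

```python
DEFAULT_BRANCH_REF = "refs/heads/main"  # assumption: the default branch is "main"


def bake_cache_overrides(ref: str, default_ref: str = DEFAULT_BRANCH_REF) -> list:
    """Return the Bake `set` lines applied to every target (the `*` pattern)."""
    # Read the branch's own cache scope first...
    lines = [f"*.cache-from=type=gha,scope={ref}"]
    # ...then fall back to the default branch's scope, shared by all branches.
    if ref != default_ref:
        lines.append(f"*.cache-from=type=gha,scope={default_ref}")
    # Write only to the branch's own scope, so feature branches never pollute main's cache.
    # mode=max also exports intermediate layers, not only those of the final images.
    lines.append(f"*.cache-to=type=gha,scope={ref},mode=max")
    return lines


if __name__ == "__main__":
    # The text that would go into the bake-action `set:` input for a feature branch.
    print("\n".join(bake_cache_overrides("refs/heads/feature-x")))
```

Keeping reads and writes asymmetric (read two scopes, write one) is what lets a feature branch start from a warm `main` cache without ever overwriting it.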

Reducing Docker Build Times

Before the optimizations described in this article, running the default GitHub Actions workflow on an empty API Platform project took around 5.5 minutes, most of which (about 5 minutes 10 seconds) was spent building the Docker images used to run tests and various linters. Caching build layers has improved the situation considerably. Unfortunately, the cache sometimes expires. Also, even with a populated cache, some build steps were always redone. Finally, some people use CI systems other than GitHub Actions, and having fast builds for everyone, including users installing API Platform or Symfony locally for the first time, would be even better.

Optimizing Cache Layers Thanks to Multi-Stage Builds

For a year now, Symfony Docker and API Platform have been using multi-stage Docker builds to provide different images for development and production environments. For instance, Xdebug, the famous PHP debugger, is available and ready to use in dev but isn’t included in the production image.

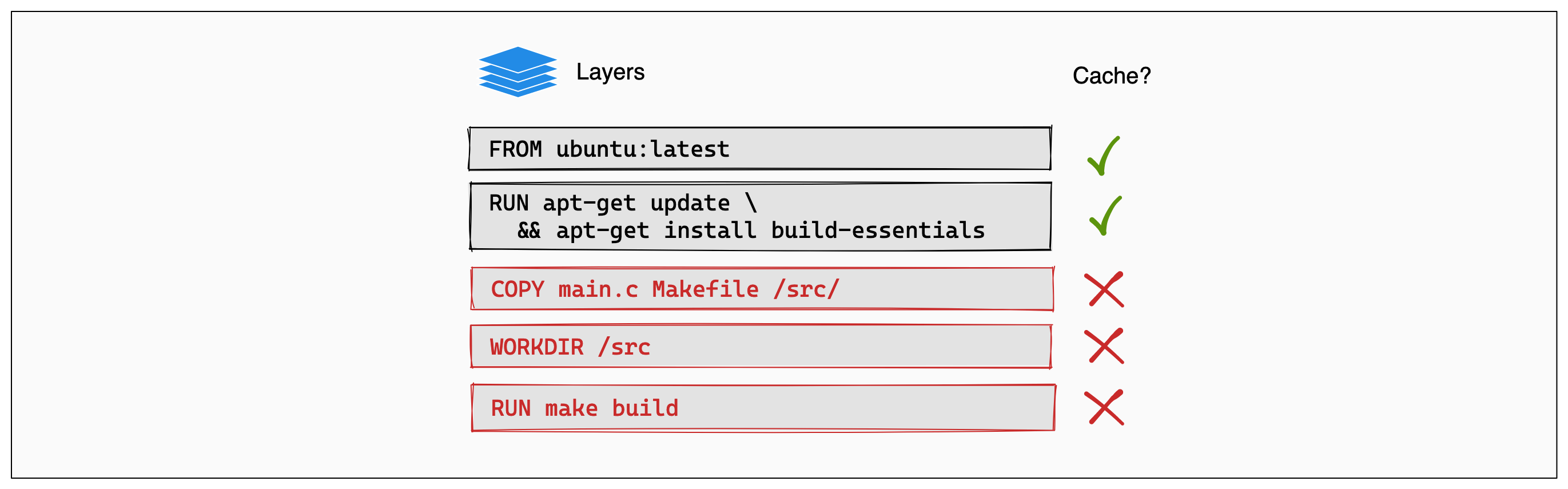

By analyzing the builds, we figured out that we could also take advantage of multi-stage builds to prevent the frequent invalidation of many Docker cache layers.

Whenever a layer changes, the cache of every subsequent layer is invalidated. Therefore, as soon as the source code of an application changes, every layer that comes after the step copying it must be rebuilt. Symfony Docker built the PHP development image on top of the production image. This was unfortunate: the production image obviously contains the application’s source code, so any modification to the application required rebuilding the layers used for development (such as the one installing the Xdebug extension).

To make matters worse, both the Caddy image (which needed the static assets to serve) and the Next.js image provided by API Platform suffered from similar issues.
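The invalidation rule can be illustrated with a toy model of the layer cache, sketched below in Python. It is a simplification, not how BuildKit actually computes cache keys: each layer is identified by its instruction text plus a digest of its inputs (an assumed stand-in for file checksums and build args), and each key is chained to its parent’s key, so a change anywhere propagates to every layer above it. The instruction strings are hypothetical and mimic the old layout, in which development layers such as Xdebug sat on top of the copied source code.

```python
import hashlib
from typing import List, Tuple

# A layer as (instruction, digest of the inputs it consumes); the digest is a stand-in
# for the file checksums and build args a real builder would hash.
Layer = Tuple[str, str]


def cache_keys(layers: List[Layer]) -> List[str]:
    """Chain each layer's key to its parent's key, like a (much simplified) build cache."""
    keys: List[str] = []
    parent = ""
    for instruction, inputs in layers:
        parent = hashlib.sha256(f"{parent}|{instruction}|{inputs}".encode()).hexdigest()
        keys.append(parent)
    return keys


def invalidated_layers(before: List[Layer], after: List[Layer]) -> List[str]:
    """Return the instructions whose cache key differs from the previous build."""
    old_keys, new_keys = cache_keys(before), cache_keys(after)
    return [instr for (instr, _), old, new in zip(after, old_keys, new_keys) if old != new]


def old_dev_image(source_digest: str) -> List[Layer]:
    """Development layers stacked on top of the production layers (hypothetical steps)."""
    return [
        ("FROM php", ""),
        ("COPY . /app", source_digest),
        ("RUN install-php-extensions xdebug", ""),
    ]


if __name__ == "__main__":
    print(invalidated_layers(old_dev_image("v1"), old_dev_image("v2")))
    # ['COPY . /app', 'RUN install-php-extensions xdebug']
```

Even though the Xdebug step itself did not change, its key depends on the key of the source-code layer below it, so it is rebuilt.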

I made two changes to these images that dramatically improve cache dynamics as well as build times, even when the cache is fresh:

- I’ve introduced new “base” stages containing all the layers shared between production and development images, but not the source code. These base stages are inherited by two images: a production image (which contains the source code) and a development image (previously, the development image extended the production image). This simple change avoids the need to rebuild the development images when the source code changes!

- In development, the source code is mounted in the container as a volume (volumes are defined in the `docker-compose.override.yml` file we provide). The development images used to include the source code as well, but this copy was useless because the volume hides it. The source code is no longer copied to development images, which speeds up builds even further. This change also unlocked some simplifications and optimizations specific to how Symfony Docker initially installs Symfony.
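The effect of the new “base” stages can be checked with a small Python model. Each stage is described by its parent stage and the instructions it adds; the stage names (`base`, `prod`, `dev`) and instruction strings are illustrative placeholders, not the actual Symfony Docker Dockerfile. A layer must be rebuilt if its own inputs changed or if any layer below it was rebuilt.

```python
from typing import Dict, List, Optional, Set, Tuple

# Stage name -> (parent stage or None, instructions added by this stage).
Layout = Dict[str, Tuple[Optional[str], List[str]]]

# Old layout: the development stage extends the production stage, source code included.
OLD_LAYOUT: Layout = {
    "prod": (None, ["FROM php", "RUN install system packages", "COPY source code"]),
    "dev": ("prod", ["RUN install xdebug"]),
}

# New layout: both images extend a shared base; only prod copies the source code
# (in development, the code is mounted as a volume instead).
NEW_LAYOUT: Layout = {
    "base": (None, ["FROM php", "RUN install system packages"]),
    "prod": ("base", ["COPY source code"]),
    "dev": ("base", ["RUN install xdebug"]),
}


def layers_to_rebuild(layout: Layout, stage: str, changed: Set[str]) -> List[str]:
    """Return the layers of `stage`, inherited ones included, that must be rebuilt."""
    parent, own = layout[stage]
    rebuilt = layers_to_rebuild(layout, parent, changed) if parent else []
    # Rebuilds propagate upward, so a non-empty parent result means the
    # parent's topmost layer is invalidated and so is everything on top of it.
    dirty = bool(rebuilt)
    for instruction in own:
        dirty = dirty or instruction in changed
        if dirty:
            rebuilt.append(instruction)
    return rebuilt


if __name__ == "__main__":
    edit = {"COPY source code"}  # the application's code was modified
    print(layers_to_rebuild(OLD_LAYOUT, "dev", edit))  # ['COPY source code', 'RUN install xdebug']
    print(layers_to_rebuild(NEW_LAYOUT, "dev", edit))  # []
```

With the shared base, a code change only touches the production branch of the stage tree; the development image is left entirely cached.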

Downloading Caddy Instead of Building it

Both API Platform and Symfony Docker use the Caddy web server (version 2.7 has just been released; I’m a big fan of this piece of software) with its Mercure (real-time capabilities) and Vulcain (effortless client-driven web APIs) modules. As these modules aren’t included in the official Caddy image, we were using the xcaddy image to build a version of Caddy containing them. This step alone took more than 2 minutes!

xcaddy requires a full Go toolchain: it has to download all the Caddy, Mercure, and Vulcain dependencies and then compile the binary locally. But all these steps can be avoided by using the official Caddy download API, which serves pre-compiled Caddy binaries containing extra modules.

Our Dockerfile for Caddy now looks like this:

```dockerfile
FROM caddy:2-alpine

ARG TARGETARCH

# Download Caddy compiled with the Mercure and Vulcain modules
ADD --chmod=500 https://caddyserver.com/api/download?os=linux&arch=$TARGETARCH&p=github.com/dunglas/mercure/caddy&p=github.com/dunglas/vulcain/caddy /usr/bin/caddy
```

There are two tricks to explain here:

- We use the `ADD` instruction to replace the Caddy binary in the official image with a binary containing the Mercure and Vulcain modules, downloaded directly from the Caddy project servers. Thanks to this clever use of `ADD`, we don’t have to install `curl` or any other additional tool.
- Caddy is written in Go, so we need to download a binary compiled for the CPU architecture we’re using. For instance, on Apple Silicon, we need a binary compiled for the arm64 architecture. Fortunately, Docker provides the `TARGETARCH` build arg, which exposes the architecture of the target platform so that we can pass it to the Caddy API (the “new” BuildKit backend is required to benefit from this arg)!

Downloading the pre-built binary just takes 1.5 seconds.
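The URL passed to `ADD` follows a simple pattern: `os` and `arch` select the target platform, and each repeated `p` parameter adds a module by its Go package path. The Python helper below (`caddy_download_url`, a hypothetical name) only assembles that URL for a given architecture, mirroring how `$TARGETARCH` is substituted at build time. It does not download anything, and it passes the architecture through unchanged, as the Dockerfile does.

```python
from urllib.parse import urlencode

CADDY_DOWNLOAD_API = "https://caddyserver.com/api/download"

MODULES = [
    "github.com/dunglas/mercure/caddy",
    "github.com/dunglas/vulcain/caddy",
]


def caddy_download_url(arch: str, modules: list, os_name: str = "linux") -> str:
    """Build the download URL for a Caddy binary bundling the given modules."""
    # doseq=True emits one `p=` pair per module; safe="/" keeps package paths unescaped.
    query = urlencode({"os": os_name, "arch": arch, "p": modules}, doseq=True, safe="/")
    return f"{CADDY_DOWNLOAD_API}?{query}"


if __name__ == "__main__":
    # Same URL as in the Dockerfile when BuildKit sets TARGETARCH=arm64 (e.g. Apple Silicon).
    print(caddy_download_url("arm64", MODULES))
```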

From 6 Minutes to 40 Seconds

The cumulative result of all these optimizations is impressive: with a warm cache, the test workflow of an empty project using Symfony Docker takes 50 seconds to run, whereas it used to take almost 6 minutes!

Results are similar for API Platform: the workflow went from 5 to 7 minutes down to around 1 minute.

Migrating Your Existing Projects

While new API Platform and Symfony Docker projects will automatically benefit from these enhancements, existing projects will not.

Dockerfiles and Docker Compose definitions are part of the skeletons of new projects, not of the vendor libraries. They are designed to be modified by the end-users. You own these files, and you have to tweak them according to the needs of your projects. This means that to benefit from the changes described in this article, you’ll need to backport them to your existing projects.

Here is the diff for API Platform, and the one for Symfony Docker!

Call the Experts

If you’d like to speed up your applications and CI/CD pipelines, reduce your ecological impact, and/or your cloud bill, don’t hesitate to contact us. We’re sure we can find ways to optimize your projects!

If you like my work on free and open-source software or my writing, also consider sponsoring me on GitHub!

This topic will also be at the core of my keynote at the API Platform Conference on September 21 (in Lille and online). I’ll explain how these optimizations, coupled with FrankenPHP, deliver even better results while simplifying both compilation and deployment. It’s not too late to come!
